AI-generated images are making it harder to tell what’s real online. OpenAI, the company behind ChatGPT and one of the leaders in AI development, has promised tools to spot AI-made content, and now it’s starting to deliver. Developers can sign up to test its new image detector, which OpenAI says can accurately flag nearly all images made with its own DALL-E generator. But there are still ways to fool the detector.

Right now, the best way to spot an image made with OpenAI’s DALL-E 3 is to check its metadata. Earlier this year, OpenAI started adding C2PA metadata to DALL-E images, which marks them as AI creations. When OpenAI’s Sora video generator becomes available to everyone, its output will include this metadata too. But the metadata might not survive every type of image editing. OpenAI has also joined the C2PA (Coalition for Content Provenance and Authenticity), the group behind the standard, to help improve it.
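
For the curious, here is a rough sketch of what a metadata check could look like. It is only a heuristic, not OpenAI’s method: it assumes the C2PA data is embedded in the file as a JUMBF box labeled `c2pa`, and it simply looks for those byte markers. The function names are made up for this example, and the check does not verify any signature.

```python
from pathlib import Path

# Byte markers that typically appear when a C2PA manifest is embedded:
# "jumb" is the JUMBF box type, "c2pa" is the manifest store label.
# Assumption: both appear as plain bytes in the file.
C2PA_MARKERS = (b"jumb", b"c2pa")


def looks_like_c2pa(data: bytes) -> bool:
    """Heuristic: True only if every marker occurs somewhere in the data."""
    return all(marker in data for marker in C2PA_MARKERS)


def file_may_have_c2pa(path: str) -> bool:
    """Read an image file and apply the same heuristic to its raw bytes."""
    return looks_like_c2pa(Path(path).read_bytes())
```

A `False` result proves nothing, since the metadata may have been stripped by editing or re-saving, and a `True` result only means the markers are present. A real check would use a proper C2PA validator that reads the manifest and verifies its signature.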

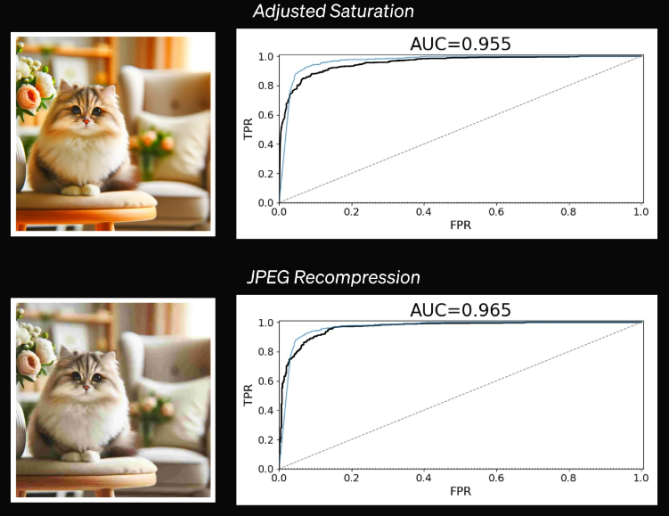

Metadata helps, but it’s not a perfect solution. Soon, you might be able to use OpenAI’s image detector to check whether an image was created by AI. OpenAI says its testing shows the detector identifies nearly all DALL-E images while rarely flagging a real image by mistake. It can still catch an image after some common edits, such as cropping. But other changes, such as certain color adjustments or added noise, can trick it. The detector is also best at spotting images from DALL-E. It’s not as good at spotting images from other AI models like Midjourney or Stable Diffusion.

This limitation raises plenty of questions. Would someone trying to make fake images even use an “official” tool like DALL-E? Other publicly available AI generators also have rules to prevent misuse. But someone with bad intentions might modify an existing AI model to bypass those rules. Could that change the output so much that detectors like this one can’t spot the fakes?

Sadly, nobody knows how common AI fakes will become or how easy they will be to spot. AI models keep getting better, and OpenAI is just starting to work on its content detector. The company is asking researchers and journalism groups to help test the tool and see how well it works. It’s good that OpenAI is trying to solve this problem, but it would’ve been better to have working AI detectors before everyone could make anything they want with just a text prompt.

Source: ExtremeTech